Home » 2019

Yearly Archives: 2019

How the limits of the mind shape human language

(Rebogged from The Conversation)

When we speak, our sentences emerge as a flowing stream of sound. Unless we are really annoyed, We. Don’t. Speak. One. Word. At. A. Time. But this property of speech is not how language itself is organised. Sentences consist of words: discrete units of meaning and linguistic form that we can combine in myriad ways to make sentences. This disconnect between speech and language raises a problem. How do children, at an incredibly young age, learn the discrete units of their languages from the messy sound waves they hear?

Over the past few decades, psycholinguists have shown that children are “intuitive statisticians”, able to detect patterns of frequency in sound. The sequence of sounds rktr is much rarer than intr. This means it is more likely that intr could occur inside a word (interesting, for example), while rktr is likely to span two words (dark tree). The patterns that children can be shown to subconsciously detect might help them figure out where one word begins and another ends.

One of the intriguing findings of this work is that other species are also able to track how frequent certain sound combinations are, just like human children can. In fact, it turns out that that we’re actually worse at picking out certain patterns of sound than other animals are.

Linguistic rats

One of the major arguments in my new book, Language Unlimited, is the almost paradoxical idea that our linguistic powers can come from the limits of the human mind, and that these limits shape the structure of the thousands of languages we see around the world.

One striking argument for this comes from work carried out by researchers led by Juan Toro in Barcelona over the past decade. Toro’s team investigatedwhether children learned linguistic patterns involving consonants better than those involving vowels, and vice versa.

They showed that children quite easily learned a pattern of nonsense words that all followed the same basic shape: you have some consonant, then a particular vowel (say a), followed by another consonant, that same vowel, yet one more consonant, and finally a different vowel (say e). Words that follow this pattern would be dabale, litino, nuduto, while those that break it are dutone, bitado and tulabe. Toro’s team tested 11 month old babies, and found that the kids learned the pattern pretty well.

But when the pattern involved changes to consonants as opposed to vowels, the children just didn’t learn it. When they were presented with words like dadeno, bobine, and lulibo, which have the same first and second consonant but a different third one, the children didn’t see this as a rule. Human children found it far easier to detect a general pattern involving vowels than one involving consonants.

The team also tested rats. The brains of rats are known to detect and processdifferences between vowels and consonants. The twist is that the rat brains were too good: the rats learned both the vowel rule and the consonant rule easily.

Children, unlike rats, seem to be biased towards noticing certain patterns involving vowels and against ones involving consonants. Rats, in contrast, look for patterns in the data of any sort. They aren’t limited in the patterns they detect, and, so they generalise rules about syllables that are invisible to human babies.

This bias in how our minds are set up has, it seems, influenced the structure of actual languages.

Impossible languages

We can see this by looking at the Semitic languages, a family that includes Hebrew, Arabic, Amharic and Tigrinya. These languages have a special way of organising their words, built around a system where each word can be defined (more or less) by its consonants, but the vowels change to tell you something about the grammar.

For example, the Modern Hebrew word for “to guard” is really just the three consonant sounds sh-m-r. To say, “I guarded”, you put the vowels a-a in the middle of the consonants, and add a special suffix, giving shamarti. To say “I will guard”, you put in completely different vowels, in this case e-o and you signify that it’s “I” doing the guarding with a prefixed glottal stop giving `eshmor. The three consonants sh-m-r are stable, but the vowels change to make past or future tense.

We can also see this a bit in a language like English. The present tense of the verb “to ring” is just ring. The past is, however, rang, and you use yet a different form in The bell has now been rung. Same consonants (r-ng), but different vowels.

Our particularly human bias to store patterns of consonants as words may underpin this kind of grammatical system. We can learn grammatical rules that involve changing the vowels easily, so we find languages where this happens quite commonly. Some languages, like the Semitic ones, make enormous use of this. But imagine a language that is the reverse of Semitic: the words are fundamentally patterns of vowels, and the grammar is done by changing the consonants around the vowels. Linguists have never found a language that works like this.

We could invent a language that worked like this, but, if Toro’s results hold up, it would be impossible for a child to learn naturally. Consonants anchor words, not vowels. This suggests that our particularly human brains are biased towards certain kinds of linguistic patterns, but not towards other equally possible ones, and that this has had a profound effect on the languages we see across the world.

Charles Darwin once said that human linguistic abilities are different from those of other species because of the higher development of our “mental powers”. The evidence today suggests it is actually because we have different kinds of mental powers. We don’t just have more oomph than other species, we have different oomph.

Where Do the Rules of Grammar Come From?

Reblogged from Psychology Today

When we speak our native language we unconsciously follow certain rules. These rules are different in different languages. For example, if I want to talk about a particular collection of oranges, in English I say

Those three big oranges

In Thai, however, I’d say

Sôm jàj sǎam-lûuk nán

This is, literally, Oranges big three those, the reverse of the English order. Both English and Thai are pretty strict about this, and if you mess up the order, speakers will say that you’re not speaking the language fluently.

The rule of course doesn’t mention particular words, like big, or nán, it applies to whole classes of words. These classes are what linguists call grammatical categories: things like Noun, Verb, Adjective and less familiar ones like Numeral (for words like three or five) and Demonstrative (the term linguists use for words like this and those). Adjectives, Numerals and Demonstratives give extra information about the noun, and linguists call these words modifiers. The rules of grammar tell us the order of these whole classes of words.

Where do these rules come from? The common-sense answer is that we learn them: as English speakers grow up, they hear the people around them saying many, many sentences, and from these they generalize the English order; Thai speakers, hearing Thai sentences, generalize the opposite order (and English-Thai bilinguals get both). That’s why, if you present an English or Thai speaker with the wrong order, they’ll immediately detect something is wrong.

But this common-sense answer raises an interesting puzzle. The rules in English, from this kind of perspective, look roughly as follows:

The normal order of words that modify nouns is:

- Demonstratives precede Numerals, Adjectives and Nouns

- Numerals precede Adjectives and Nouns

- Adjectives precede Nouns

There are 24 possible ways of ordering the four grammatical categories. If the order in different languages is simply a result of speakers learning their languages, then we’d expect to see all 24 possibilities available in languages around the world. Everything else being equal, we’d also expect the various orders to be pretty much equally common.

However, in the 1960s, the comparative linguist, Joseph Greenberg, looked at a bunch of unrelated languages and found that this simply wasn’t true. Some orders were much more common than others, and some orders simply didn’t seem to be present in languages at all. More recent work by Matthew Dryer looking at a much larger collection of languages has confirmed Greenberg’s original finding. It turns out that the English and Thai orders are by far the most common, while other orders—such as that of Kîîtharaka, a Bantu language spoken in Kenya, in which you say something like Oranges those three big—are much rarer. Dryer, like Greenberg, found that some orders just didn’t exist at all.article continues after advertisement

This is a surprise if speakers learn the order of words from what they hear (which they surely do), and each order is just as learnable as the others. It suggests either that some historical accident has led to certain orders being more frequent in the world’s languages, or, alternatively, that the human mind somehow prefers some orders to others, so these are the ones that, over time, end up being more common.

To test this, my colleagues Jennifer Culbertson, Alexander Martin, Klaus Abels, and I are running a series of experiments. In these, we teach people an invented language, with specially designed words that are easily learnable, whether the native language of our speakers is English, Thai or Kîîtharaka. We call this language Nápíjò and we tell the people who are doing the experiments that it’s a language spoken by about 10,000 people in rural South-East Asia. This is to make Nápíjò as real as possible to people.

Though we keep the words in Nápíjò the same for all our speakers, we mess around with the order of words. When we test English speakers, we teach them a version of Nápíjò with the Adjectives, Numerals and Demonstratives after the Noun (unlike in English); we also teach Nápíjò to Thai speakers, but we use a version with all these classes of words before the noun (unlike in Thai).

The idea behind the experiments is this: We teach speakers some Nápíjò, enough so they know the order of the noun and the modifiers, but not enough so they know how the modifiers are ordered with respect to each other. Then we have them guess the order of the modifiers. Their guesses tell us about what kind of grammar rule they are learning, and the pattern of the guesses should tell us something about whether that grammar rule is coming from their native language, or from something deeper in their minds.article continues after advertisement

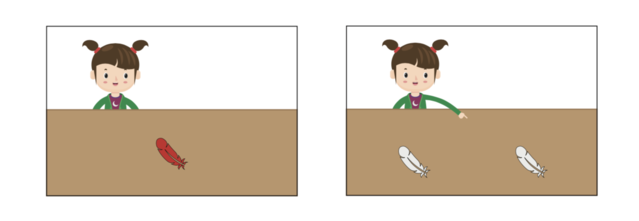

In the experiments, we show speakers pictures of various situations–for example, a girl pointing to a collection of feathers—and we give our speakers the Nápíjò phrase that corresponds to these. The Nápíjò phrases that the participants hear for the diagrams below would correspond to red feather on the left and to that feather, on the right. We give the speakers enough examples so that they learn the pattern.

Red Feather and That Feather

Once the participants in the experiment have learned the pattern, we then test to see what they would do when you have two modifiers: for example, an Adjective and a Numeral, or a Demonstrative and an Adjective. We have of course, somewhat evilly, not given our speakers any clue as to what they should do. They have to guess!

Now, if the speakers are simply using the order of words from their native language, then they should be more likely to guess that the order of words in Nápíjò would follow it. That’s not, however, what English or Thai speakers do.

For a picture that shows a girl pointing at “those red feathers”, English speakers don’t guess that the Nápíjò order is Feathers those red, which would keep the English order of modifiers but is very rare cross-linguistically when the noun comes first. Instead, they are far more likely to go for Feathers red those, which is very common cross-linguistically (it is the Thai order), but is definitely not the English order of the modifiers.article continues after advertisement

Thai speakers, similarly, didn’t keep the Thai order of Adjective and Demonstrative. Instead, they were more likely to guess the English order, which, when the noun comes last (as it did for them), is very common across languages.

This suggests that the patterns of language types discovered by Greenberg, and put on an even firmer footing by Dryer, may not be the result of chance or of history. Rather the human mind may have an inherent bias towards certain word orders over others.

Our experiments are not quite finished, however. Both English and Thai speakers use patterns in their native language that are very common in languages generally. But what would happen if we tested speakers of one of the very rare patterns? Would even they still guess that Nápíjò uses an order which is more frequent in languages of the world?

The U.K. team with local team member Patrick Kanampiu

To find out, we’ve just arrived in Kenya, where we will do a final set of experiments. We’ll test speakers of Kîîtharaka (where the order is roughly Oranges those three big). We’ll test them on a version of Nápíjò where the noun comes last, unlike in their own language. If they behave just like Thai speakers, who we also taught a version of Nápíjò where the noun comes last, we will have really strong evidence that the linguistic nature of the human mind shapes the rules we learn. Who knows though, science is, after all, a risky business!

(Many thanks to my co-researchers, Jenny Culbertson (Principal Investigator), Alexander Martin and Klaus Abels for comments, and to the UK Economic and Social Research Council for funding this research)

What Invented Languages Can Tell Us About Human Language

(Reblogged from Psychology Today)

Hash yer dothrae chek asshekh?

This is how you ask someone how they are in Dothraki, one of the languages David Peterson invented for the phenomenally successful Game of Thrones series. It is an idiom in Peterson’s constructed language, meaning, roughly “Do you ride well today?” It captures the importance of horse-riding to the imaginary warriors of the land of Essos in the series.

Invented, or constructed languages, are definitely coming into their own. When I was a young nerd, about the age of eleven or twelve, I used to make up languages. Not very good ones, as I knew almost nothing then about how languages work. I just invented words that I thought looked cool on the page (lots of xs and qs!), and used these in place of English words.

I wasn’t the only person that did this. J.R.R Tolkien wrote an essay with the alluring title of A Secret Vice, all about his love of creating languages: their words, their sounds, their grammar and their history, and the internet has created whole communities of ‘conlangers’, sharing their love of invented languages, as Arika Okrent documents in her book In the Land of Invented Languages.

For the teenage me, what was fascinating was how creating a language opened up worlds of the imagination and how it allowed me to create my own worlds. I guess it’s not surprising that I eventually ended up doing a PhD in Linguistics. Those early experiments with inventing languages made me want to understand how real languages work. So I stopped creating my own languages and, over the last three decades, researched how Gaelic, Kiowa, Hawaiian, Kiitharaka, and many other languages work.

A few years back, however, I was asked by a TV producer to create some languages for a TV series, Beowulf, and that reinvigorated my interest in something I hadn’t done since my early 20s. It also made me realise that thinking about how an invented language could work actually helps us to tackle some quite deep questions in both linguistics and in the psychology of language.

To see this, let me invent a small language for you now.

This is how you say “Here’s a cat.” in this language:

Huna lo.

and this is how you say “The cat is big.”

Huna shin mek.

This is how you say “Here’s a kitten.”

Tehili lo.

Ok. Your turn. How do you say “The kitten is big.”?

Easy enough, right? It’s:

Tehili shin mek.

You’ve spotted a pattern, and generalized that pattern to a new meaning.

Ok, now we can get a little bit more complicated. To say that the cat’s kitten is big, you say

Tehili ga huna shin mek.

That’s it. Now let’s see how well you’ve learned the language. If I tell you that the word for “tail” is loik, how do you think you’d say: “The cat’s tail is big.”?

Well, if “The cat’s kitten is big” is Tehili ga huna shin mek, you might guess,

Loik ga huna shin mek

Well done (or Mizi mashi as they say in this language!). You’ve learned the words. You’ve also learned some of the grammar of the language: where to put the words. We’re going to push that a little further, and I’ll show you how inventing a language like this can cast interesting facts about human languages into new light.

The fragments of the constructed language you’ve learned so far have come from seeing the patterns between sound (well, actually written words) and meaning. You learned that cat is huna and kitten is tehili by seeing them side by side in sentences meaning “Here’s a cat.” and “Here’s a kitten.”. You learned that the possessive meaning between cat and kitten (or cat and tail) is signified by putting the word for what is possessed first, followed by the word ga, then the word for the possessor.

This is a little like how linguists begin to find out how a language that is new to them works. I’ve learned how many languages work in this way: by consulting with native speakers, finding out the basic words, seeing how the speaker expresses whole sentences, and figuring out what the patterns are that connect the words and the meanings. This technique allows you to discover how a language functions: what its sounds and words are, and how the words come together to make up the meanings of sentences.

Now, how do you think you’d say: “The cat’s kitten’s tail is big.”?

You’d probably guess that it would be:

Loik ga tehili ga huna shin mek.

The reasoning works a little like this: if the cat’s kitten is tehili ga huna, and the kitten is the possessor of the tail, then the cat’s kitten’s tail should be loik ga tehili ga huna. Similarly, if the word for “tip” is mahia, then you should be able to say

Mahia ga loik ga tehili ga huna shin mek.

That’s a pretty reasonable assumption. In fact, it’s how many languages work. But say I tell you that there’s a rule in my invented language: there’s a maximum of two gas allowed. So you can say “The cat’s kitten’s tail is big.”, but you can’t say “The cat’s kitten’s tail’s tip is big.” My language imposes a numerical limit. Two is ok, but three is just not allowed.

Would you be surprised to know that we don’t know of a single real language in the whole world that works like this? Languages just don’t use specific numbers in their grammatical rules.

I’ve used this invented language to show you what real languages don’t ever do. I’ll come back in the next blog to how we can use invented languages to understand what real languages can’t do.

We can see the same property of language in other areas too. Think of the child’s nursery rhyme about Jack’s house. It starts off with This is the house that Jack built. In this sentence we’re talking about a house, and we’re saying something about it: Jack built it. The dramatic tension then builds up, and we meet … the malt!

This is the malt that lay in the house that Jack built.

Now we’re talking about malt (which is what you get when you soak grain, let it germinate, then quickly dry it with hot air). We’re saying something about the malt: it lay in the house that Jack built. We’ve said something about the house (Jack built it) and something about the malt (it lay in the house). English allows us to combine all this into one sentence. If English were like my invented language, we’d stop. There would be a restriction that you can’t do this more than twice, so the poor rat, who comes next in the story, would go hungry.

This is rat that ate the malt that lay in the house that Jack built.

But English doesn’t work like my invented language. In English, we can keep on doing this same grammatical trick, eventually ending up with the whole story, using one sentence.

This is the farmer sowing his corn,

That kept the cock that crow’d in the morn,

That waked the priest all shaven and shorn,

That married the man all tatter’d and torn,

That kissed the maiden all forlorn,

That milk’d the cow with the crumpled horn,

That tossed the dog,

That worried the cat,

That killed the rat,

That ate the malt

That lay in the house that Jack built.

This is actually a very strange, and very persistent property of all human languages we know of: the rules of language can’t count to specific numbers. Once you do something once, you either stop, or you can go on without limit.

What makes this particularly intriguing is that there are other psychological abilities that are restricted to particular numbers. For example, humans (and other animals) can immediately determine the exact number of small amounts of things, up to 4 in fact. If you see a picture with either two or three dots, randomly distributed, you know immediately the exact number of dots without counting. In contrast, if you see a picture with five or six dots randomly distributed, you actually have to count them to know the exact number.

The ability to immediately know exact amounts of small numbers of things is called subitizing. Psychologists have shown that we do it in seeing, hearing and feeling things. We can immediately perceive the number if it’s under 4, but not if it’s over. In fact, even people who have certain kinds of brain damage that makes counting impossible for them still have the ability to subitize: they know immediately how many objects they are perceiving, as long as it’s fewer than 4.

But languages don’t do this. Some languages do restrict a rule so it can only apply once, but if it can apply more than once, it can apply an unlimited number of times.

This property makes language quite distinct from many other areas of our mental lives. It also raises an interesting question about how our minds generalize experience when it comes to language.

A child acquiring language will rarely hear more than two possessors, as I document in my forthcoming book Language Unlimited, following work by Avery Andrews. Why then do children not simply construct a rule based on what they experience? Why don’t at least some of them decide that the language they are learning limits the number of possessors to two, or three, like my invented language does?

Children’s ability to subitize should provide them with a psychological ability to use as a limit. They hear a maximum of three possessors, so why don’t they decide their language only allows three possessors. But children don’t do this, and no language we know of has such a limit.

Though our languages are unlimited, our minds, somewhat paradoxically, are tightly constrained in how they generalize from our experiences as we learn language as infants. This suggests that the human mind is structured in advance of experience, and it generalizes from different experiences differently, and idea which goes against a prevailing view in neurosciencethat we apply the same kinds of generalizing capacity to all of our experiences.